Large language models:

Positioned to transform underwriting and claims management

January 2024

In our previous article, we explored how Large Language Models (LLMs) work and identified considerations carriers must make when choosing to implement LLMs. This article series considers how LLMs could transform underwriting and claims management.

The insurance industry is no stranger to data; it thrives on it. Yet, a significant portion of this data is unstructured, trapped in documents such as attending physician statements (APS). However, that is quickly changing. Large language models (LLMs) have emerged as an accessible technology that can unlock these treasure troves of data and deliver previously difficult-to-access information and insights.

Over the years, businesses have produced data at rapidly increasing rates. They’ve also realized that this data has inherent value: it’s an asset that can be leveraged, not simply something to be stored. In a fully data-powered future, underwriting and claims functions might look totally different.

The problem, of course, is getting to the good stuff, and that means understanding the layers of data we capture on a day-to-day basis. Structured data is already machine-readable, containing specific information such as a policy ID, coverage details, or even a policyholder's contact information. Semi-structured data could include computer logs or electronic health records, where some but not all data is easily accessed. The most elusive layer is unstructured data, which may include attending physician statements, claims notes, and treaties. Unstructured data has the potential to be transformative if we can effectively harness it. Large language models have the potential to provide this ability.

What can LLMs do?

Generally speaking, LLMs are very good at recognizing context and synthesizing data. Based on these core strengths, they display an impressive set of capabilities, including:

- Unstructured data analysis

Documents can be summarized, compared, and quality checked, all using relatively simple but effective prompting of an LLM. For example, LLMs could summarize claim notes in a database and identify trends or patterns across claims. APS analysis could be accomplished more efficiently, allowing for the extraction and summarization of information that is most relevant to an underwriter. Treaties could be compared to understand any potential differences in wording as well as context. Open-source LLMs that are well suited to unstructured data analysis include LLaMA, BLOOM, Falcon, and MPT.

- Data mining and modelling

Although LLMs are mostly known for their application to unstructured data, structured data also benefits from their inherent capabilities. LLMs can provide users with a natural language method for querying, analyzing, and extracting information from structured data. Frameworks such as LlamaIndex and LangChain provide analysis and model generation capabilities. When paired with graph databases such as Neo4j and vector databases such as ChromaDB and Pinecone, these frameworks provide powerful analysis and modelling capabilities for many different types of use cases.

- Task planning

Recently, there has been significant progress in using LLMs for task planning via multiple autonomous agents. Multi-agent systems represent the next level of AI-based problem-solving. Intelligent agents can work together to solve more complex problems by breaking down a complex task into simpler steps and assigning each step to an intelligent agent that can be trained with specific expertise. Imagine being able to generate intelligent agents to handle each step in a claim adjudication process, with each agent learning its step through simple training.
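To make the natural language querying of structured data more concrete, here is a minimal sketch in Python. It assumes a hypothetical `fake_llm_to_sql` function standing in for a real model call, and an invented `claims` table; neither comes from a specific product. The point it illustrates is that model-generated SQL can be checked before it runs.

```python
import sqlite3


def fake_llm_to_sql(question: str) -> str:
    """Hypothetical stand-in for an LLM that turns a question into SQL.

    A real system would send the question and the table schema to a model.
    """
    return "SELECT COUNT(*) FROM claims WHERE status = 'open'"


def is_read_only(sql: str) -> bool:
    """Accept a single SELECT statement only; reject anything else."""
    stripped = sql.strip().rstrip(";")
    return stripped.lower().startswith("select") and ";" not in stripped


def answer(question: str, conn: sqlite3.Connection) -> list:
    """Translate the question to SQL, validate it, then run it."""
    sql = fake_llm_to_sql(question)
    if not is_read_only(sql):
        raise ValueError(f"Refusing to run non-SELECT statement: {sql!r}")
    return conn.execute(sql).fetchall()


# Invented sample data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE claims (claim_id TEXT, status TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO claims VALUES (?, ?, ?)",
    [("C-1", "open", 1200.0), ("C-2", "closed", 300.0), ("C-3", "open", 950.0)],
)

result = answer("How many claims are still open?", conn)
print(result)
```

The read-only check is deliberately crude. A production system would more likely rely on database permissions than on string inspection.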

Putting it all together

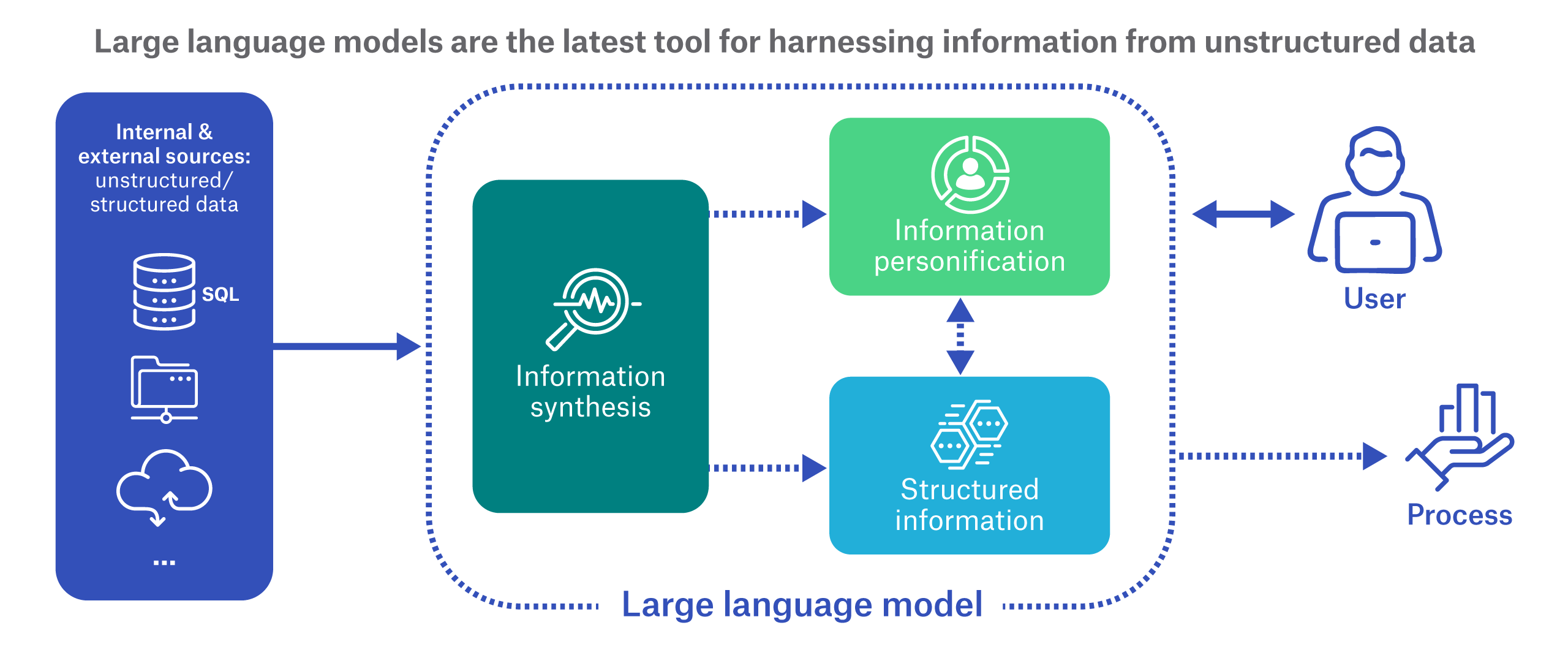

This list of standalone tasks is notable, but it’s just the start. The distinctive power of LLMs lies in two capabilities that can be used independently or together to improve efficiency or remove roadblocks to achieving a particular business strategy or automated process.

- Information synthesis

Multiple sources of unstructured data can be connected and summarized to create actionable information that can be used in machine processing. For example, an LLM could be given an APS and an underwriting manual and instructed to deliver summaries of impairments along with underwriting recommendations.

- Information personification

Because of our ability to interact with LLMs using natural language, LLMs can also “personify” structured and unstructured data. We could ask the LLM questions about the data where a single answer depends on the LLM’s ability to efficiently interpret, mine, synthesize, and summarize information across multiple distinct data sources. The end result is a seemingly intelligent response from the data as if a person were responding.
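As a rough illustration of information synthesis, the sketch below assembles several labelled sources into a single prompt. The function name `build_synthesis_prompt`, the section markers, and the sample text are all assumptions made for this example; the resulting string would then be passed to whichever model is in use.

```python
def build_synthesis_prompt(sources: dict[str, str], instruction: str) -> str:
    """Label each source document, then append the task instruction."""
    sections = [f"### {name}\n{text.strip()}" for name, text in sources.items()]
    return "\n\n".join(sections) + f"\n\n### Task\n{instruction}"


# Invented excerpts, for illustration only.
sources = {
    "APS excerpt": "Office visit: patient reports well-controlled hypertension.",
    "Underwriting manual excerpt": "Hypertension: assess control and treatment history.",
}

prompt = build_synthesis_prompt(
    sources,
    "Summarize each impairment and suggest an underwriting recommendation "
    "for human review, citing the source section for every statement.",
)
print(prompt)
```

Asking the model to cite the source section for each statement is one simple way to make the output easier for a reviewer to check.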

These two building blocks offer new opportunities to address specific challenges across a business. Let’s consider use cases in two important insurance functions – underwriting and claims.

Efficient underwriting

Imagine a set of LLMs collectively called “Munich Re LLM,” whose purpose is to maximize information utilization and speed up the underwriting process. How could information synthesis be applied? A simple solution might be to train it to read APS data for the purpose of summarizing impairments. Going further, we could envision a scenario where multiple inputs (APS, application, calculators, underwriting manual, and other documents) are fed into the models. Munich Re LLM might put everything together automatically within the model, synthesize the information, and output an underwriting recommendation for review.

Munich Re LLM might also include an LLM to provide natural language interaction (in other words, information personification). An underwriter could query the model during the underwriting review process by asking questions such as, “When was the patient’s last doctor’s visit?” or “Was there a weight change in the last 12 months? If so, what are the reasons for the loss or gain?”

These queries would relieve the underwriter of having to manually inspect lengthy APS to find the relevant information. They also go beyond simply extracting information from the APS, since the underwriter is asking the model to assess that information. In this case, the underwriter is asking not only whether a BMI has increased or decreased, but also why the change occurred.
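One way such a query might be served is to first narrow a lengthy APS down to the passages most relevant to the question, and only then ask the model. The sketch below uses plain word overlap as the relevance score; this is a simplification chosen for illustration (real systems often use embeddings and a vector database), and the passages are invented.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_passages(question: str, passages: list[str], k: int = 2) -> list[str]:
    """Return the k passages sharing the most words with the question."""
    q_tokens = tokenize(question)
    ranked = sorted(
        passages, key=lambda p: len(q_tokens & tokenize(p)), reverse=True
    )
    return ranked[:k]


# Invented APS passages, for illustration only.
passages = [
    "2023-03-14: Office visit. Weight 82 kg. Patient reports starting a new exercise program.",
    "2022-09-02: Office visit. Weight 91 kg. Blood pressure 128/82.",
    "2021-06-20: Family history reviewed. No tobacco use.",
]

question = "Was there a weight change in the last 12 months?"
selected = top_passages(question, passages)

# The selected passages would be placed in the prompt ahead of the question.
for passage in selected:
    print(passage)
```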

In the future, when we are further down the road with LLM training and testing in a life insurance setting, we could even imagine a generative AI workflow in which an applicant is loaded, an underwriting report is generated, and an offer letter is output. Each step of the process would be performed by an intelligent LLM-based agent in a multi-agent pipeline.
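The shape of such a pipeline can be sketched with plain functions standing in for the agents. Everything here is hypothetical: the `Case` class, the three agent functions, and their outputs are placeholders, and a real agent would call a model rather than format a string.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Case:
    """Shared state handed from one agent to the next."""
    applicant_id: str
    artifacts: dict = field(default_factory=dict)
    history: list = field(default_factory=list)


def intake_agent(case: Case) -> None:
    # Placeholder for loading the applicant's data.
    case.artifacts["application"] = f"Application on file for {case.applicant_id}"


def report_agent(case: Case) -> None:
    # Placeholder for generating the underwriting report.
    case.artifacts["report"] = "Underwriting report based on: " + case.artifacts["application"]


def letter_agent(case: Case) -> None:
    # Placeholder for drafting the offer letter.
    case.artifacts["offer_letter"] = "Draft offer letter, citing: " + case.artifacts["report"]


PIPELINE: list[tuple[str, Callable[[Case], None]]] = [
    ("intake", intake_agent),
    ("report", report_agent),
    ("letter", letter_agent),
]


def run_pipeline(case: Case) -> Case:
    """Run each agent in order and record which steps completed."""
    for name, agent in PIPELINE:
        agent(case)
        case.history.append(name)
    return case


case = run_pipeline(Case(applicant_id="A-100"))
print(case.history)
print(case.artifacts["offer_letter"])
```

Keeping a step history on the case makes it possible to see afterwards which agent produced which artifact.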

Intelligent claims management

The traditional claims process is labor-intensive. Claims information might be accepted by a claims team in a variety of disconnected ways: handwritten forms, voice memos, phone calls, online portals, apps, or chatbots. Manual processes are often involved to review, consolidate, input, triage, and adjudicate the claims.

With an AI-enabled process, once the incoming claims information is digitized, LLM information synthesis and personification can be applied. Using LLMs could facilitate automated summary, review, triage, and adjudication, supported by natural language interaction with the claim data if the claim manager has additional questions.

That’s not to say that the human touch in claims should disappear entirely; rather, an AI-enabled process could make the best use of limited resources. As the model assembles the information and potentially even does triage, the claims manager would be able to ask questions across the assembly of claims information, and with those answers in hand, arrive at a decision much more efficiently.

Huge upside; guardrails required

In our business, we are swimming in huge amounts of unstructured data and some of our processes are manual and time-consuming. Large language models, if properly trained, tested, and controlled, could provide an excellent solution for harnessing valuable information from unstructured data, synthesizing data across multiple sources, and personifying it for easy human consumption. This could mean significant operational efficiency improvements for key functions like underwriting and claims.

However, for insurance professionals, risk mitigation is always top of mind. While this technology is emerging in our industry, controls must be put in place to mitigate risk, such as effective audits, challenger systems, and governance to ensure legislative compliance. At Munich Re, we’re already investing in the expertise and testing to harness LLMs for life and disability insurance. We believe there's immense value here, and we know that understanding the constraints and challenges will allow us to deliver solutions that are truly effective and transformative.
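As one possible reading of a challenger system combined with an audit trail, the sketch below compares the recommendation of a primary ("champion") model with that of a second ("challenger") model and flags any disagreement for human review. The function name, record fields, and case data are assumptions made for illustration.

```python
import json
from datetime import datetime, timezone


def compare_recommendations(case_id: str, champion: str, challenger: str) -> dict:
    """Build an audit record and flag disagreements for human review."""
    return {
        "case_id": case_id,
        "champion": champion,
        "challenger": challenger,
        # Compare case-insensitively so formatting differences are not flagged.
        "needs_human_review": champion.strip().lower() != challenger.strip().lower(),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


# Invented model outputs, for illustration only.
audit_log = [
    compare_recommendations("CASE-001", "standard", "Standard"),
    compare_recommendations("CASE-002", "standard", "refer"),
]

for record in audit_log:
    print(json.dumps(record))

flagged = [r["case_id"] for r in audit_log if r["needs_human_review"]]
print(flagged)
```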